Implementing Taylor Series in APL

What problem are we trying to solve?

Have you ever wondered how your favourite programming language’s standard library computes the values of $\sin$, $\cos$ and other trigonometric functions?

There are many ideas that might come to your mind. First, we might use the reflective property for negative arguments (e.g. $\sin(-x) = -\sin(x)$ holds) to bring the argument into the range $[0, \infty)$. Then, the full period shift could be used to further reduce the range to $[0, 2\pi)$, yielding the $\sin(x) = \sin(x \bmod 2\pi)$ formula for $x \geq 0$. While there are more interesting tricks to further reduce the domain for easier computations, we can’t repeat these steps forever.
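
As a rough illustration of these two reductions, here is a minimal sketch in Python rather than APL. It assumes the function in question is the sine, and the helper name `reduce_argument` is made up for this example:

```python
import math

def reduce_argument(x):
    """Return (sign, r) such that sin(x) == sign * sin(r) and 0 <= r < 2*pi."""
    sign = 1.0
    if x < 0.0:
        # Reflective (odd) property: sin(-x) = -sin(x).
        sign, x = -1.0, -x
    # Full period shift: sin(x) = sin(x mod 2*pi) for x >= 0.
    r = math.fmod(x, 2.0 * math.pi)
    return sign, r

sign, r = reduce_argument(-7.0)
# Both printed values should agree up to rounding.
print(sign * math.sin(r), math.sin(-7.0))
```

For very large arguments the floating-point value of $2\pi$ is itself slightly off, so a production implementation would need a more careful reduction than this sketch.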

Using just mathematical insight, we’ve established that computing $\sin(x)$ for $x \in [0, 2\pi)$ is enough to tell the values of $\sin(x)$ for all $x \in \mathbb{R}$. There are many possibilities to follow from there, for example, using the ridiculous (but valued by engineers) approximation $\sin(x) \approx x$ combined with some more properties:

Such an approximation is too inaccurate for many use cases, however, so a different method has to be used. In practice, the values of $\sin(x)$ are numerically approximated using two mathematical devices: Chebyshev polynomials and Taylor series.

Since this wouldn’t be the first time Chebyshev polynomials star in one of my blog posts, today I’d like to demonstrate my implementation of the Taylor series in APL.

What is a Taylor series anyway?

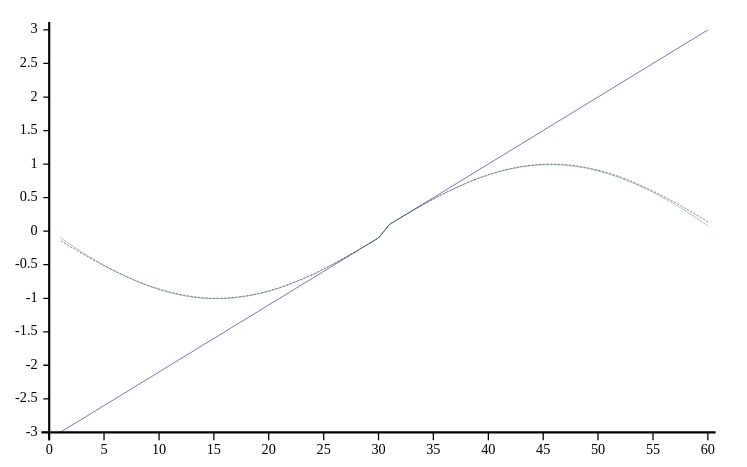

Assuming that the $n$-th derivative of a function $f$ at a point $a$ is computable for every $n$, it is possible to express the function as an infinite sum of terms using the Taylor series. Since infinite sums are generally not useful in numerical methods, we (almost) always use a partial sum formed by the first few terms of a Taylor series. Taylor polynomials (for a given $n$, the first $n + 1$ terms of a Taylor series form a polynomial of degree $n$) are approximations of a function, which generally become better as $n$ increases.
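
As a concrete instance, taking $f = \sin$ and $a = 0$ purely for illustration: the derivatives of sine at zero cycle through $0, 1, 0, -1$, so the Taylor polynomial of degree 7 works out to

$$\sin(x) \approx x - \frac{x^3}{3!} + \frac{x^5}{5!} - \frac{x^7}{7!}$$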

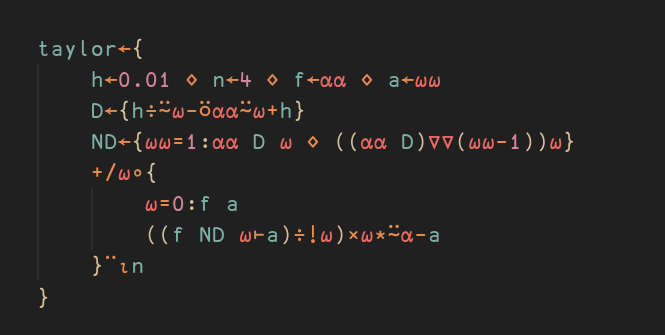

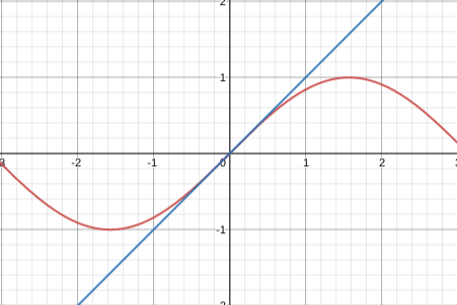

A famous approximation derived using the Taylor series is demonstrated below.

Implementing Taylor Series

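The general formula for the Taylor series of a function $f$ around a point $a$ is fairly straightforward and it’s the basis for my implementation in APL:

$$f(x) = \sum_{n=0}^{\infty} \frac{f^{(n)}(a)}{n!} (x - a)^n$$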

The first immediate concern is computing the values of the $n$-th derivative of $f$. Recalling the definition of a derivative:

$$f'(x) = \lim_{h \to 0} \frac{f(x + h) - f(x)}{h}$$

I arbitrarily pick a small value of $h$ and thus approximate the derivative at a point $x$:

$$f'(x) \approx \frac{f(x + h) - f(x)}{h}$$
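
The same idea can be sketched in Python (not the APL used in this post). The name `derivative` and the step `h=1e-5` are assumptions made for this example only:

```python
import math

def derivative(f, h=1e-5):
    """Return a function approximating f' with a forward difference of step h."""
    def df(x):
        return (f(x + h) - f(x)) / h
    return df

dsin = derivative(math.sin)
# The derivative of sin is cos, so each pair should roughly agree.
print(dsin(0.0), math.cos(0.0))
print(dsin(1.0), math.cos(1.0))
```

The step is a trade-off: a larger `h` increases the truncation error of the difference quotient, while a much smaller one amplifies floating-point rounding.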

It’s not the end of the story just yet, though, since it’s not just the first derivative that’s needed. An important (and somewhat trivial) observation is that one can apply the operator $n$ times to get the $n$-th derivative!

Verifying the computations by hand, the results seem to be correct. Unfortunately, there is no way to apply an operator a given number of times to a function (analogously to the existing ⍣ for functions), so I had to roll my own recursive $n$-th derivative function:

In the end, it’s nothing too complicated: the first derivative marks the end of the recursion, the second derivative is the first derivative of the first derivative, and so on. Additionally, I decided to hardcode the degree of the Taylor polynomial that is going to be computed using n←4.
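
The recursion described above can be sketched in Python as follows. This is an illustration rather than the APL definition, and the names `derivative` and `nth_derivative`, the step `h=1e-2` and the test function `cube` are all hypothetical:

```python
def derivative(f, h=1e-2):
    """Forward-difference approximation of f'."""
    def df(x):
        return (f(x + h) - f(x)) / h
    return df

def nth_derivative(f, n, h=1e-2):
    """Approximate the n-th derivative of f (n >= 1) by nesting `derivative`."""
    if n == 1:
        # Base case: the first derivative ends the recursion.
        return derivative(f, h)
    # The n-th derivative is the first derivative of the (n-1)-th derivative.
    return derivative(nth_derivative(f, n - 1, h), h)

cube = lambda x: x ** 3
# Exact values at x = 2 are 12, 12 and 6; the printed ones are approximations.
print(nth_derivative(cube, 1)(2.0))
print(nth_derivative(cube, 2)(2.0))
print(nth_derivative(cube, 3)(2.0))
```

Each level of nesting divides by `h` once more, so rounding errors grow quickly with `n`. That is why this sketch uses a coarser step than one would pick for a single derivative.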

Assuming the ⍵ parameter to the taylor function is the point at which the Taylor polynomial is evaluated, my implementation is almost ready:

The final line will map a function that generates Taylor polynomial terms to build the final approximation at the given point. Recalling the expression above, the implementation will split into two branches:

The 0-th term of the Taylor polynomial is always $f(a)$, while the next ones follow the standard formula:

$$\frac{f^{(n)}(a)}{n!} (x - a)^n$$

where $f^{(n)}(a)$ is the $n$-th derivative of $f$ at point $a$.
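
Putting the two branches together, a minimal Python sketch (again not the APL code, with the hypothetical names `nth_derivative` and `taylor`, an assumed step `h=1e-2` and an expansion point `a` passed explicitly) might look like this:

```python
import math

def nth_derivative(f, n, h=1e-2):
    """n-th derivative of f by nested forward differences; n = 0 returns f itself."""
    if n == 0:
        return f
    g = nth_derivative(f, n - 1, h)
    return lambda x: (g(x + h) - g(x)) / h

def taylor(f, a, x, degree=4):
    """Evaluate the Taylor polynomial of f around a, of the given degree, at x."""
    total = f(a)  # the 0-th term
    for n in range(1, degree + 1):
        # n-th term: f^(n)(a) / n! * (x - a)^n
        total += nth_derivative(f, n)(a) / math.factorial(n) * (x - a) ** n
    return total

# The two printed values should be close, though not identical.
print(taylor(math.sin, 0.0, 0.5), math.sin(0.5))
```

The result carries two sources of error: the truncation of the series at the chosen degree and the inaccuracy of the finite-difference derivatives.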

Testing

I test my implementation as follows:

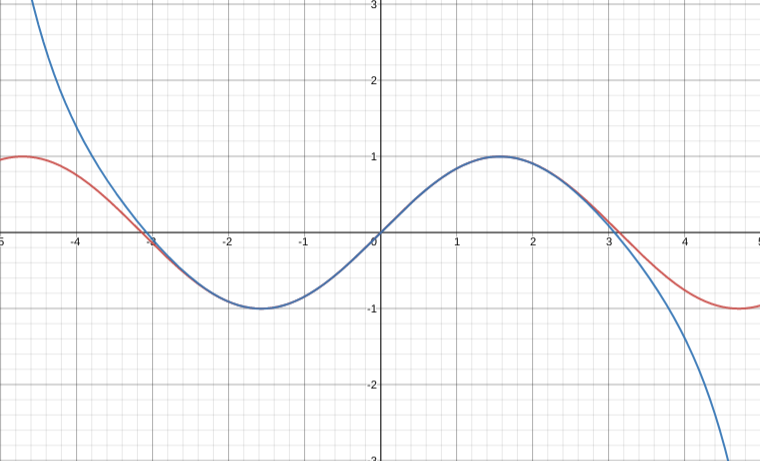

It appears that my implementation is fairly close to being acceptably accurate, but I can make it better by increasing the Taylor polynomial degree to n←7:
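
To see the effect of the degree in isolation from finite-difference error, the following Python sketch uses the exact derivatives of sine at zero instead of numerical ones. The function names and the test point $\pi/2$ are assumptions chosen for illustration, not part of the APL implementation:

```python
import math

def sin_derivative_at_zero(n):
    """n-th derivative of sin at 0: the values cycle through 0, 1, 0, -1."""
    return (0.0, 1.0, 0.0, -1.0)[n % 4]

def sin_taylor(x, degree):
    """Taylor polynomial of sin around 0 of the given degree, evaluated at x."""
    return sum(sin_derivative_at_zero(n) / math.factorial(n) * x ** n
               for n in range(degree + 1))

x = math.pi / 2
for degree in (4, 7):
    approx = sin_taylor(x, degree)
    # Print the degree, the approximation and its absolute error.
    print(degree, approx, abs(approx - math.sin(x)))
```

The error for degree 7 comes out noticeably smaller than for degree 4, in line with the observation that Taylor polynomials generally improve as the degree grows.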

Summary

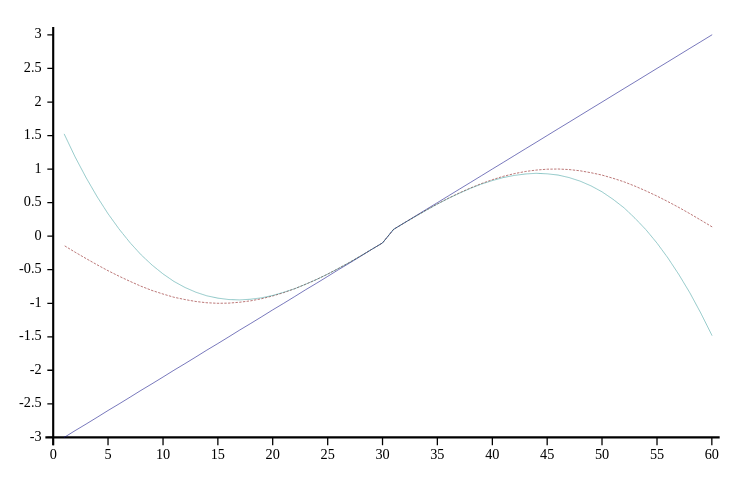

I believe that this tiny Taylor Series implementation in APL is somewhat unique, since it conveys many concepts of mathematics in an almost verbatim way. There’s an incredibly obvious link to notice between the last line of my implementation:

… and the actual mathematical formula:

It’s a proof (or rather a demonstration) of sorts that APL is well-suited for representing concepts in mathematics that overlap with numerical methods and actual computation. Finally, the full source code follows: